The Impact of Query Fan-Out on B2B SaaS and Agency SEO

Mastering Query Fan-Out: The Enterprise Playbook for AI Search Dominance

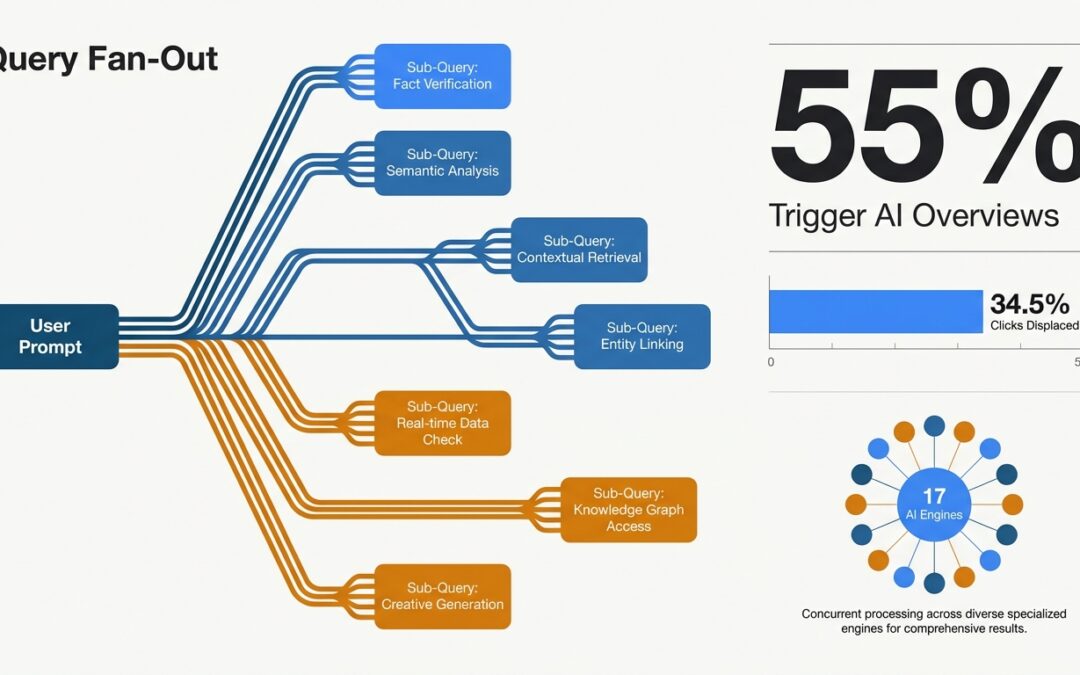

Your brand’s narrative is no longer controlled by your website; it’s being synthesized, fragmented, and re-assembled by AI. Query fan-out is the mechanism driving this shift, where a single user prompt is deconstructed into multiple, parallel sub-queries to build a comprehensive answer. For US enterprise SEOs and marketing directors, this isn’t a future trend—it’s the present reality. With an estimated 34.5% of traditional search clicks already being displaced by AI summaries, mastering this multi-threaded logic is the only way to prevent catastrophic visibility loss and ensure your brand is cited as the authority across more than 17 different AI engines.

The age of linear search is over. Today, 55% of all searches can trigger AI Overviews, where engines don’t just link to sources—they synthesize complex answers from scattered data points. This technique, known as query fan-out, is designed to solve the user’s core problem, not just match their keywords [12]. Relying on traditional keyword volume and rank tracking is now a strategy for obsolescence.

To maintain and grow market share, enterprise brands must move from a defensive posture to an offensive strategy. This guide provides that playbook. We will dissect the core mechanics of query fan-out, introduce a four-pillar framework for enterprise execution, and detail the critical server latency and cross-engine variations that determine success or failure. We will also demonstrate how to transition your teams from static reporting to automated, revenue-driving workflows, securing your visibility in the new AI-driven ecosystem.

Author: Pedro Spota, Director of Growth at ELOGIA (Viko Group) & Co-Founder of AI Rankia.

Bio: Pedro orchestrates a team of 200+ AI agents focused on marketing, sales, and SEO, leveraging advanced frameworks and platforms like n8n to optimize conversion lift and lower cost-to-serve.

LinkedIn: https://www.linkedin.com/in/pedrospota

Transparency Disclosure: This guide was developed using proprietary intent data from AI Rankia’s tracking of 17+ global AI models, combined with analysis of Google’s official patents (US20240289407A1) and enterprise SEO case studies.

> * What is Query Fan-Out? It’s an AI process where one user query generates 5-15 hidden, parallel sub-queries to gather diverse information before creating a summary. Your content is judged against these hidden queries, not the user’s original input.

> * The Impact: Traditional SEO is obsolete. Success is no longer about ranking #1 but about being cited as an authoritative source within AI-generated answers. Failing to adapt means losing control of your brand narrative and ceding traffic to competitors.

> * The 4-Pillar Solution: Dominance requires a new strategy based on:

> 1. Comprehensive Topic Mapping: Move beyond keywords to discover and target the hidden sub-queries AI engines use to build answers.

> 2. Infrastructure Optimization: Ensure server response times are under 800ms to be considered a viable source during AI retrieval.

> 3. Multi-Engine Alignment: Create robust content that satisfies the different retrieval logics of Google, ChatGPT, Perplexity, and others.

> 4. Automated Execution: Implement programmatic workflows to turn fan-out analysis into actionable content briefs at enterprise scale.

> * The Critical Metric: Shift focus from “rankings” to “citation rate.” The goal is to become the most frequently cited source for your core business topics across all major AI platforms.

The Core Mechanics of Query Fan-Out

At its heart, query fan-out is the process where an AI engine receives one user prompt and instantly generates multiple synthetic query-document pairs to gather comprehensive, multi-faceted context before synthesizing an answer. This represents a fundamental shift from linear search to multi-threaded LLM retrieval, a concept Google has explored for years in patents like “Thematic Search,” which refers to these sub-queries as “themes” [2].

Think of it like briefing a team of expert researchers. Instead of giving one researcher a single question, you give them the core topic and ask them to explore every relevant angle—cost, benefits, implementation, alternatives, and common problems. The final report is a synthesis of all their findings. Query fan-out is the AI’s version of this process, executed in milliseconds.

- Assign a Core Topic: Instead of a single question, the AI is given a core topic to explore.

- Explore All Angles: The AI generates multiple sub-queries to investigate cost, benefits, alternatives, and problems.

- Synthesize Findings: The information from all sub-queries is consolidated into a single, comprehensive report.

- Deliver the Answer: The final synthesized answer is presented to the user in the AI Overview.
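The four steps above can be sketched in a few lines of Python. This is a toy illustration, not how any search engine is implemented: the `FACETS` list, the `expand` and `retrieve` helpers, and the placeholder passages are all assumptions made for the example. Real engines generate their sub-queries with an LLM rather than a fixed template.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical facets taken from the researcher analogy; real engines
# generate sub-queries with an LLM rather than a fixed template.
FACETS = ["cost", "benefits", "implementation", "alternatives", "common problems"]


def expand(topic: str) -> list[str]:
    """Turn one topic into several sub-queries, one per facet."""
    return [f"{topic} {facet}" for facet in FACETS]


def retrieve(sub_query: str) -> str:
    """Stand-in for a search backend call; returns a placeholder passage."""
    return f"[passage answering '{sub_query}']"


def fan_out(topic: str) -> dict[str, str]:
    """Run every sub-query in parallel and collect the passages by sub-query."""
    sub_queries = expand(topic)
    with ThreadPoolExecutor(max_workers=len(sub_queries)) as pool:
        passages = list(pool.map(retrieve, sub_queries))
    return dict(zip(sub_queries, passages))
```

Calling `fan_out("auto loans")` returns one placeholder passage per facet. The synthesis step would then combine those passages into a single answer.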

To understand this mechanism, we can look at Google’s patent US20240289407A1, which details a system using LLMs for “prompted expansion” [1][4]. Unlike traditional keyword expansion that finds simple synonyms (e.g., “car” and “automobile”), this system generates entirely new, diverse questions to explore a topic from multiple angles. As a result, your content is no longer judged against what the user typed, but against a multitude of LLM-generated queries that dive deeper into the web to verify facts and context [3]. Adhering to official guidelines for AI features is now a foundational requirement for visibility [5].

A practical application of this principle can be seen in how Navy Federal Credit Union structures its Auto Loans page [8]. The page uses clear H2 and H3 headings that directly mirror the likely sub-queries an AI would generate, such as “New & Used Auto Loan Rates,” “How to Apply for an Auto Loan,” and “Auto Loan Calculators.” This structure doesn’t just target keywords; it preemptively answers the AI’s fanned-out questions, making it a prime candidate for citation. This success pattern, structuring content to answer anticipated sub-queries, is a key takeaway for any enterprise team.
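One rough way to audit a page against this pattern is to check how many anticipated sub-queries are mirrored by a heading. The sketch below uses simple token overlap. The `coverage` function, the 0.6 threshold, and the sample sub-queries are assumptions for illustration, and real retrieval systems match on meaning rather than shared words.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens, ignoring very short words such as 'to' or 'an'."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 2}


def coverage(headings: list[str], sub_queries: list[str],
             threshold: float = 0.6) -> dict[str, bool]:
    """Mark a sub-query as covered when one heading shares enough of its tokens."""
    heading_tokens = [tokens(h) for h in headings]
    result = {}
    for query in sub_queries:
        q = tokens(query)
        # Best share of the query's tokens found in any single heading.
        best = max((len(q & h) / len(q) for h in heading_tokens), default=0.0) if q else 0.0
        result[query] = best >= threshold
    return result


# Headings quoted in the article; the sub-queries are hypothetical.
headings = [
    "New & Used Auto Loan Rates",
    "How to Apply for an Auto Loan",
    "Auto Loan Calculators",
]
report = coverage(headings, ["used auto loan rates", "auto loan refinance penalties"])
```

Here `report` flags "used auto loan rates" as covered and "auto loan refinance penalties" as a gap, which is the kind of signal a content team could use to decide which heading to add next.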

The Enterprise Playbook: A 4-Pillar Strategy for Fan-Out Dominance

Adapting to query fan-out requires a systematic, enterprise-grade strategy that goes far beyond updating a few blog posts. It demands a holistic approach encompassing topic modeling, infrastructure, content alignment, and automation. This four-pillar framework provides the blueprint.

Pillar 1: Comprehensive Topic Mapping

The first step is to abandon keyword research in favor of comprehensive topic mapping. The goal is to identify the entire universe of sub-queries an AI might generate for your core business topics.

Manual Method (for analysis, not scale):

Practitioners can use Chrome DevTools to monitor network requests while interacting with an AI. This reveals the exact synthetic queries being dispatched in real-time. Similarly, using APIs like Gemini’s Grounding API helps extract the specific entities the model associates with your topic.

The Enterprise Challenge:

Manual analysis is impossible to scale across thousands of product and service pages. Furthermore, industry analysis reveals the volatile nature of this process: only 27% of fan-out queries remain consistent across multiple runs of the same prompt [6]. A staggering 95% of these fan-out phrases show zero monthly search volume in traditional tools, rendering them invisible to standard SEO platforms. This highlights the need for programmatic, continuous monitoring to discover and prioritize these hidden queries.
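To make the volatility point concrete, a team could log the sub-queries observed on each run of the same prompt and compute how many recur every time. The sketch below is one possible definition of consistency, not the method used in the cited analysis. The `stability` function and the sample runs are illustrative assumptions.

```python
from collections import Counter


def stability(runs: list[list[str]]) -> float:
    """Share of distinct sub-queries that appear in every run of the same prompt."""
    # Count each sub-query once per run, after light normalization.
    counts = Counter(q for run in runs for q in {s.strip().lower() for s in run})
    if not counts:
        return 0.0
    stable = sum(1 for n in counts.values() if n == len(runs))
    return stable / len(counts)


# Hypothetical sub-queries captured across three runs of one prompt.
runs = [
    ["crm pricing", "crm integrations", "crm for small teams"],
    ["crm pricing", "crm for small teams", "crm data migration"],
    ["crm pricing", "crm security", "crm for small teams"],
]
score = stability(runs)  # 2 of 5 distinct sub-queries recur in all runs
```

Sub-queries that recur in every run are the safest targets for dedicated headings. The volatile remainder is better handled by broad topical coverage.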

Pillar 2: Infrastructure & Latency Optimization

High-quality content is useless if it’s too slow for the AI to retrieve. Query fan-out involves 8-10 parallel queries executed in milliseconds. This creates a direct correlation between server latency and AI visibility.

A recent fast-tracked one-week experiment clearly demonstrated this impact [7]. Pages with server response times exceeding 800 milliseconds consistently failed to appear in AI Overviews, despite hosting relevant content. The LLM’s retrieval timeout window simply closed before the slow server could deliver its payload.

⚠️ The 800ms Latency Cliff

Content is rendered invisible to AI Overviews if the server response time (TTFB) exceeds 800 milliseconds. The LLM’s retrieval timeout window closes before a slow server can deliver its content, regardless of quality.
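A quick way to see where a page stands against this threshold is to time the first byte of the response. The sketch below uses only the Python standard library. The measurement is approximate, since it includes DNS lookup and TLS setup from wherever the script runs, and the 800 ms budget is the figure reported in the experiment above, not a limit published by any AI engine. `measure_ttfb_ms` and `within_budget` are hypothetical helper names.

```python
import time
import urllib.request

BUDGET_MS = 800  # threshold reported in the article; an assumption, not an official limit


def within_budget(ttfb_ms: float, budget_ms: float = BUDGET_MS) -> bool:
    """True when the measured response time does not exceed the budget."""
    return ttfb_ms <= budget_ms


def measure_ttfb_ms(url: str, timeout: float = 5.0) -> float:
    """Approximate time to first byte: request start until the first body byte is read."""
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read(1)  # block until the first byte of the body arrives
    return (time.perf_counter() - start) * 1000
```

Running `within_budget(measure_ttfb_ms(url))` against a list of priority URLs from several regions gives a first-pass view of which pages need infrastructure work.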

To solve this, enterprises must shift focus to backend infrastructure, specifically the trade-off between “fan-out on read” versus “fan-out on write” architectures.

Architecture for Speed: Read vs. Write

| Option | Pros | Cons | Score |
|---|---|---|---|
| Fan-Out on Read | Flexible; queries data sources in real-time. | Often too slow for AI retrieval due to high latency. | 4/10 |
| Fan-Out on Write | Delivers pre-computed, flat HTML almost instantly. | Less flexible; requires pre-computing and caching data. | 9/10 |

- Fan-Out on Read: Queries multiple data sources in real-time. This is flexible but often too slow for AI retrieval.

- Fan-Out on Write: Pre-computes and caches complex data relationships. This allows servers to deliver flat, fully rendered HTML almost instantaneously, ensuring your content is served before the LLM’s strict retrieval timeout closes.
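The trade-off can be illustrated with a toy in-memory store. This is a minimal sketch, assuming a page assembled from two data sources. The `ProductPages` class and its fields are hypothetical, and a production system would use a real cache or static build step instead of dictionaries.

```python
class ProductPages:
    """Toy store contrasting fan-out on read with fan-out on write."""

    def __init__(self) -> None:
        self.specs: dict[str, str] = {}
        self.prices: dict[str, str] = {}
        self.rendered: dict[str, str] = {}  # pre-rendered HTML cache

    def _render(self, sku: str) -> str:
        """Assemble HTML from every underlying data source."""
        spec = self.specs.get(sku, "")
        price = self.prices.get(sku, "")
        return f"<h1>{sku}</h1><p>{spec}</p><p>{price}</p>"

    def read_on_demand(self, sku: str) -> str:
        """Fan-out on read: gather every source at request time."""
        return self._render(sku)

    def write(self, sku: str, spec: str, price: str) -> None:
        """Fan-out on write: update the sources, then re-render and cache at once."""
        self.specs[sku] = spec
        self.prices[sku] = price
        self.rendered[sku] = self._render(sku)

    def read_cached(self, sku: str) -> str:
        """Serve the pre-rendered page with a single lookup."""
        return self.rendered[sku]
```

Both read paths return the same HTML. The difference is when the assembly cost is paid: on every request with `read_on_demand`, or once per update with `write`.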

At AI Rankia, we provide the infrastructure alignment strategies needed to ensure your servers can meet these demanding, multi-threaded speed requirements.

Pillar 3: Multi-Engine Content Alignment

A critical enterprise pitfall is optimizing exclusively for Google’s Gemini. This myopic focus ignores the fact that fan-out logic shifts dramatically across the 17+ AI models that shape user perception, including ChatGPT, Perplexity, and specialized international engines.

Consider the mechanical differences between the two dominant models:

AI Engine Logic: Gemini vs. ChatGPT

| Option | Pros | Cons | Score |
|---|---|---|---|
| Gemini Fan-Out | More structured, focusing on semantic relationships and entity extraction. Relies on established knowledge graphs. | More rigid and reliant on pre-trained thematic connections. | 7/10 |
| ChatGPT Fan-Out | Conversational, focusing on user context and follow-up intent. Favors recent, highly engaged content. | Highly variable output consistency, influenced by session history. | 7/10 |

| Feature | ChatGPT Query Fan-Out | Gemini Query Fan-Out |
|---|---|---|
| Query Generation Strategy | Conversational, focusing on user context and follow-up intent. | Structured, focusing on semantic relationships and entity extraction. |
| Data Source Preference | Tends to favor recent, highly engaged content (e.g., forums, social media). | Relies more heavily on established knowledge graphs and authoritative domains. |
| Output Consistency | Highly variable, influenced by prompt history and session context. | More rigid, relying on pre-trained thematic connections. |

A successful enterprise strategy requires creating content that is robust enough to satisfy these different retrieval logics simultaneously. This means building a unified content architecture that addresses the conversational queries of ChatGPT (“What are common problems people have with X?”) and the structured, entity-driven queries of Gemini (“Compare features of X vs. Y”) within the same asset. Tracking these cross-engine variations is essential for achieving global brand consistency.

Pillar 4: Automated Execution & Monitoring

Static rank trackers and manual spreadsheets are obsolete. Winning in the AI era requires a transition from passive monitoring to automated, prioritized action plans. The core challenge for enterprises is that manual analysis of fan-out gaps is not scalable.

The modern workflow is: Query > Fan-Out > Retrieval > E-E-A-T > Citation. To operationalize this, teams must automate the process of turning insights into action.

This is where programmatic workflows become essential. Here’s a practical example:

- Data Ingestion: After generating a fan-out analysis with a programmatic tool [9], the raw data (a list of high-priority, uncited sub-queries) is sent to a workflow automation platform like n8n via a webhook.

- Automated Brief Generation: The workflow prompts an LLM to generate detailed content briefs for the content team, based on the identified gaps.

- Structured Mandates: These briefs explicitly mandate that writers mirror the identified synthetic sub-queries in H2 and H3 tags, ensuring the content structure perfectly aligns with the AI’s retrieval logic.

This closed-loop system transforms complex gap analysis into actionable tasks, systematically increasing the likelihood of being cited as a trusted source and providing a measurable return on AI optimization efforts.
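The data ingestion step of this workflow can be sketched as a small script that packages the gap list and posts it to a webhook. The URL, the payload field names, and both helper functions are assumptions for illustration. An n8n Webhook node accepts whatever JSON you send it, so the shape below is one possibility, not a required schema.

```python
import json
import urllib.request

# Hypothetical endpoint; replace with the URL shown on your own n8n Webhook node.
WEBHOOK_URL = "https://n8n.example.com/webhook/fan-out-gaps"


def build_brief_request(topic: str, uncited_sub_queries: list[str]) -> dict:
    """Package the gap data plus heading mandates for the brief-writing step."""
    return {
        "topic": topic,
        # Each uncited sub-query becomes a proposed heading for the writer.
        "headings": [{"level": "h2", "text": q} for q in uncited_sub_queries],
        "instructions": "Mirror each sub-query in an H2 or H3 and answer it directly.",
    }


def send_to_workflow(payload: dict, url: str = WEBHOOK_URL) -> int:
    """POST the payload as JSON and return the HTTP status code."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Keeping `build_brief_request` separate from the network call makes the payload easy to review before anything is sent, and the downstream LLM step can read the `headings` list directly.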

US Local AI Search & Geographic Fan-Out

A common misconception is that AI search is a purely global phenomenon. This view misses how sophisticated AI models actively append US-specific geographic modifiers and hyper-local context during the fan-out process. For enterprises operating in the US, this presents a significant opportunity.

Currently, 30% of keywords trigger AI Overviews in US SERPs, and AI Mode has surpassed 100 million active users in the United States. Crucially, research demonstrates that pages successfully ranking for multiple fan-out queries are 161% more likely to be cited in AI Overviews [10]. This suggests that success hinges on covering a topic comprehensively, not just targeting a single keyword.

Consider a geographic case study for a national B2B SaaS company. A user searching for “employee benefits software” might trigger a geographic fan-out. The LLM would generate localized sub-queries like “employee benefits laws in Texas” or “California paid family leave compliance.” To capture this traffic, enterprises must structure US-specific location pages to preemptively answer these geographic sub-queries. This targeted strategy, heavily utilized in AI Campaign Intelligence, builds deep semantic coverage rather than relying on outdated keyword density. By creating localized content silos that explicitly address synthetic questions, brands can overcome generic global retrieval and become the most relevant answer for regional US searchers.

Frequently Asked Questions

What is the query fan-out method in AI search?

The query fan-out method is an AI process where a single user prompt is broken down into multiple, hidden sub-queries. Instead of a single linear search, the AI generates several synthetic questions to gather comprehensive data from various angles. It then synthesizes this fragmented information into a single, cohesive answer for the user.

How does query fan-out impact search rankings?

Query fan-out fundamentally redefines “ranking.” It shifts the focus from a single position for one keyword to being cited within an AI-generated answer. Success is measured by topical authority across dozens of AI-generated sub-queries. Pages that successfully answer multiple sub-queries are 161% more likely to be cited in AI Overviews [10].

What is the difference between traditional search and query fan-out?

The primary difference is that traditional search is linear and reactive, while query fan-out is multi-threaded and predictive. Traditional search matches the exact words a user typed. In contrast, fan-out anticipates the user’s broader informational needs by generating multiple related, synthetic queries to retrieve a more complete set of documents.

Does optimizing for query fan-out just mean adding an FAQ section?

No, simply adding an FAQ is insufficient. Optimization requires structuring your entire content asset to mirror the AI’s likely sub-queries, using clear H2 and H3 headings. As experts note, success requires proving trust through techniques like content chunking and vector checks. Simply listing related questions without logical hierarchy will fail to satisfy the AI’s structured retrieval algorithms.

How do we measure the ROI of optimizing for query fan-out?

The ROI is measured through a new set of metrics:

- Citation Rate: The percentage of target queries where your brand is cited in the AI answer.

- Share of Voice: Your brand’s visibility within AI summaries compared to competitors.

- AI-Driven Traffic: Tracking clicks from links within AI Overviews.

- Brand Sentiment: Monitoring how AI models portray your brand when answering relevant queries.

This requires setting up automated tracking for brand and query recommendations, a core component of a broader AI Reputation Management strategy [11].
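The first two metrics reduce to simple ratios once citation observations are logged. The sketch below assumes each observation records the engine, the query, and the brands cited in the answer. The `Observation` structure, the brand names, and the exact formulas are illustrative assumptions, since the article does not define them precisely.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    engine: str
    query: str
    cited_brands: list[str]


def citation_rate(observations: list[Observation], brand: str) -> float:
    """Share of observed AI answers that cite the brand at least once."""
    if not observations:
        return 0.0
    hits = sum(1 for o in observations if brand in o.cited_brands)
    return hits / len(observations)


def share_of_voice(observations: list[Observation], brand: str) -> float:
    """The brand's citations divided by all brand citations observed."""
    total = sum(len(o.cited_brands) for o in observations)
    if total == 0:
        return 0.0
    ours = sum(o.cited_brands.count(brand) for o in observations)
    return ours / total


# Hypothetical log: two queries checked across three engines.
log = [
    Observation("gemini", "best payroll software", ["Acme", "Globex"]),
    Observation("chatgpt", "best payroll software", ["Globex"]),
    Observation("perplexity", "payroll compliance texas", ["Acme"]),
    Observation("gemini", "payroll compliance texas", []),
]
rate = citation_rate(log, "Acme")    # cited in 2 of 4 answers
voice = share_of_voice(log, "Acme")  # 2 of 4 total citations
```

Filtering `log` by `engine` before calling either function gives the per-engine view needed to compare visibility across platforms.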

Conclusion: From Ranking to Citation

Query fan-out has permanently dismantled the traditional search funnel. To survive the 34.5% drop in clicks and regain control of your brand narrative, US enterprises must adopt a new playbook. Success is no longer about ranking for keywords; it’s about being cited as the authority by AI.

This requires a strategic, four-pillar approach:

- Map the entire topic universe, not just keywords.

- Optimize server infrastructure for sub-800ms response times.

- Align content for the distinct logics of all major AI engines.

- Automate execution to scale your content strategy effectively.

Clinging to outdated SEO tactics will result in irreversible visibility loss. Stop relying on static reports and manual analysis. It’s time to transition to an automated, revenue-driving system that tracks 17+ AI models simultaneously and turns insights into action.

Book a demo with AI Rankia today to deploy automated n8n workflows for your AI citations and secure your brand’s position in the future of search.

References

[1] How AI Mode and AI Overviews work based on patents. https://searchengineland.com/how-ai-mode-ai-overviews-work-patents-456346

[2] How AI Search Platforms Expand Queries with Fan-Out and Why It …. https://ipullrank.com/expanding-queries-with-fanout

[3] What I Learned From Analyzing Google’s AI Mode Patent. https://moz.com/blog/analyzing-google-ai-mode-patent

[4] Query fan-out in Google AI mode. https://usehall.com/guides/query-fan-out-ai-mode

[5] AI Features and Your Website | Google Search Central. https://developers.google.com/search/docs/appearance/ai-features

[6] Query Fan-Out: A Misunderstood Concept in AEO & SEO. https://higoodie.com/blog/query-fan-out

[7] We Tested Query Fan-Out Optimization (Here’s What We …. https://www.semrush.com/blog/query-fan-out-experiment/

[8] Understanding Query Fan-Out and How it Impacts AI Search. https://www.conductor.com/academy/query-fan-out/

[9] Query Fan-Out in Practice: Turning One Search into an …. https://ipullrank.com/query-fanout-how-to

[10] Query Fan-Out Impact: Complete 2026 Guide to AI Search …. https://almcorp.com/blog/the-query-fan-out-impact/